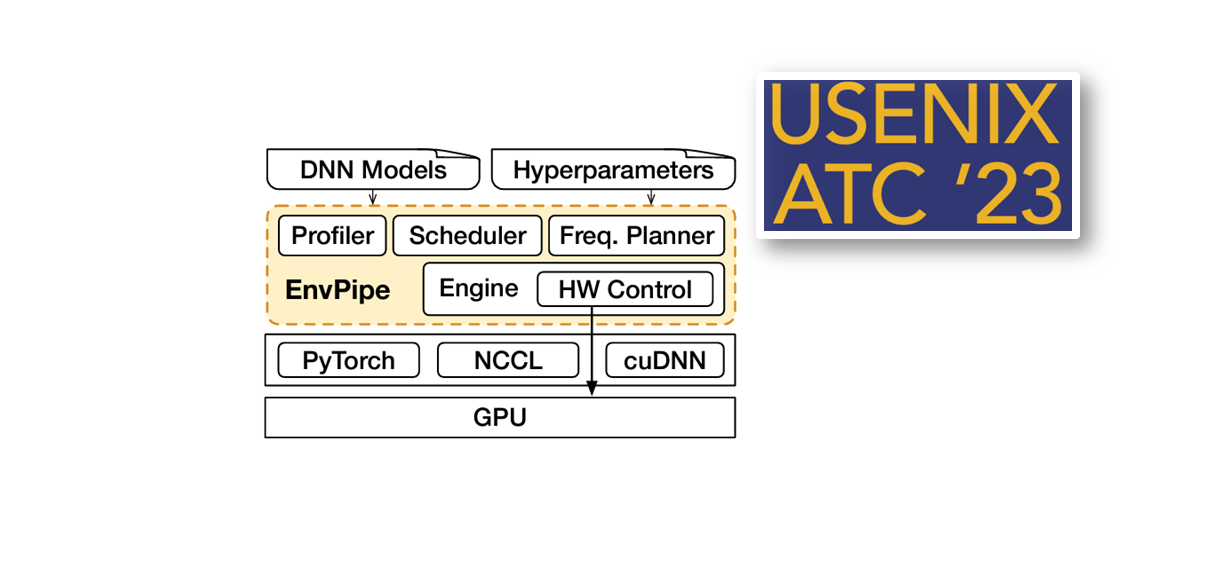

EnvPipe: Performance-preserving DNN Training Framework for Saving Energy

Abstract: Energy saving is a crucial mission for data center providers. Among many services, DNN training and inference are significant contributors to energy consumption. This work focuses on saving energy in multi-GPU DNN training. Typically, energy savings come at the cost of some degree of performance degradation. However, determining the acceptable level of performance degradation for a long-running training job can be difficult. This work proposes ENVPIPE, an energy-saving DNN training framework. ENVPIPE aims to maximize energy saving while maintaining negligible performance slowdown. ENVPIPE takes advantage of slack time created by bubbles in pipeline parallelism. It schedules pipeline units to place bubbles after pipeline units as frequently as possible and then stretches the execution time of pipeline units by lowering the SM frequency. During this process, ENVPIPE does not modify hyperparameters or pipeline dependencies, preserving the original accuracy of the training task. It selectively lowers the SM frequency of pipeline units to avoid performance degradation. We implement ENVPIPE as a library using PyTorch and demonstrate that it can save up to 25.2% energy in single-node training with 4 GPUs and 28.4% in multi-node training with 16 GPUs, while keeping performance degradation to less than 1%.
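To make the idea of stretching a pipeline unit into its trailing bubble concrete, the following is a minimal sketch, not ENVPIPE's actual algorithm. It assumes, as a simplification, that a unit's execution time scales inversely with SM frequency. The function name `pick_sm_frequency`, its parameters, and the candidate frequency list are hypothetical and do not come from the paper or from any library.

```python
def pick_sm_frequency(exec_time_ms, slack_ms, max_freq_mhz, candidate_freqs_mhz):
    """Pick the lowest SM frequency that keeps a pipeline unit within its slack.

    Assumption (illustrative only): running at frequency f stretches the unit's
    execution time to exec_time_ms * max_freq_mhz / f.
    """
    # The unit may grow into the bubble that follows it, but no further.
    budget_ms = exec_time_ms + slack_ms
    feasible = [
        f
        for f in candidate_freqs_mhz
        if f <= max_freq_mhz and exec_time_ms * max_freq_mhz / f <= budget_ms
    ]
    # With no usable slack, stay at the maximum frequency to avoid slowdown.
    return min(feasible, default=max_freq_mhz)


if __name__ == "__main__":
    # A 10 ms unit followed by a 5 ms bubble, with hypothetical frequency steps.
    print(pick_sm_frequency(10.0, 5.0, 1500, [900, 1000, 1200, 1500]))
```

Under this simplified model, a unit with no bubble after it stays at the maximum frequency, which mirrors the abstract's point that the SM frequency is lowered only selectively.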